Think of your model as a student taking exams over and over to get better at a subject. In machine learning, the main goal is to build a model that learns from data. Training happens over time, similar to how a student studies for a test. The model goes through the training data many times, adjusting itself to fix mistakes each round. Each full pass through the data is called an epoch.

It is important to understand what epoch, batch, and iteration mean in deep learning and how they work together. These terms describe how a machine learning model, especially a neural network, learns: it keeps adjusting its weights to make fewer mistakes.

This guide will explain the training loop and its main parts: what epochs are, how data is used during training, and why these ideas matter. By the end, you’ll know what epochs are and how understanding them can help you train and debug your models better.

Core Concepts in Machine Learning: Defining Epoch, Batch, and Iteration

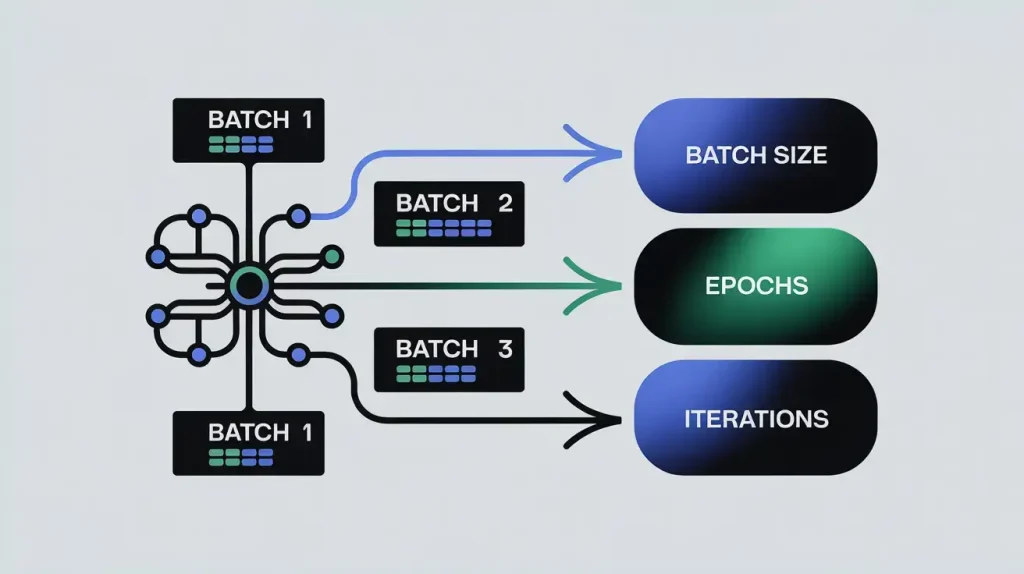

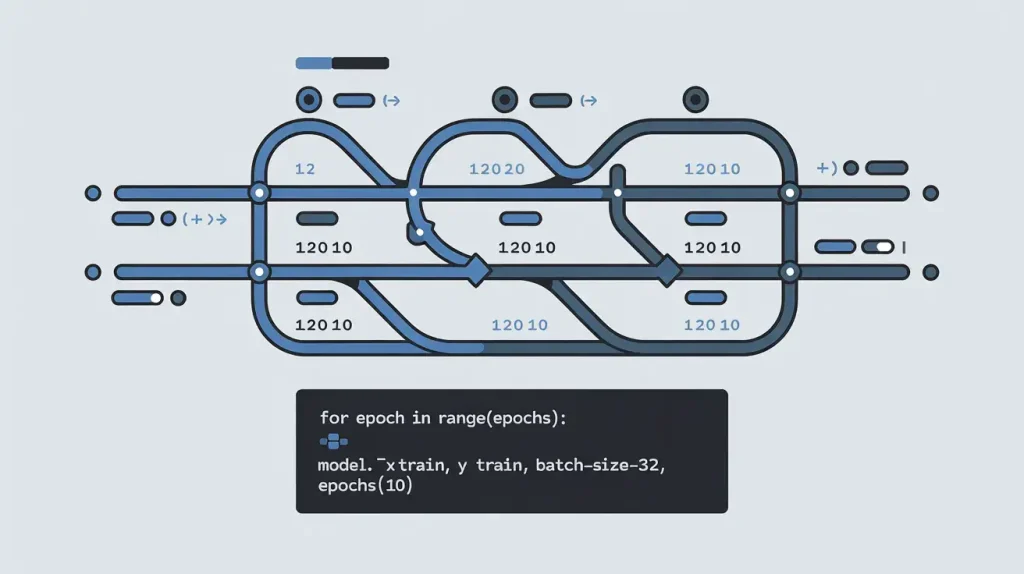

Here is how epochs, batches, and iterations relate. One epoch consists of several iterations, and each iteration works through one batch of training data.

To understand training, focus on three key terms. Epochs mean a full pass through the data. Batches are smaller groups of data. Iterations mean processing one batch and updating the model. Mixing these up can make training confusing and affect your results.

What is an Epoch in Machine Learning? Understanding Its Role

An epoch in machine learning is when the model goes through the entire training dataset once. For example, if you have 10,000 images, one epoch means the model has seen each of those images one time.

Usually, one epoch is not enough for the model to learn well. Just like a student needs to review material several times, a machine learning model needs multiple epochs to improve. With each epoch, the model updates its weights, reduces errors, and gets more accurate. (Model Training and Performance Evaluation, 2024) However, using too many epochs can lead the model to memorize the training data rather than learn to generalize. (Impact of Epochs on Model Training and Overfitting in Machine Learning, 2025) This memorization can cause the model to perform well on training data but poorly on new, unseen data. (Impact of Epochs on Model Performance and Overfitting, 2024, pp. 1-3) Balancing the number of epochs is therefore crucial.

The number of epochs you set is an important hyperparameter. It controls how many times the model is trained on the full dataset. More epochs can help the model learn better, but you need to watch for overfitting, which is when the model fits the training data too closely and performs poorly on new data. (What is Overfitting?, n.d.) A good strategy for selecting the number of epochs is to start with a small number and gradually increase it until the model’s performance on validation data stops improving. This approach avoids wasting computational resources and time while reducing the risk of overfitting.

In summary, understanding epochs is crucial for tuning a model’s training, helping ensure it learns from data effectively.

Understanding Batches in Machine Learning: How Data is Broken Down

With large datasets, it’s not practical to process all the data at once because of memory limits and slow learning. So, the data is split into smaller batches. For example, instead of giving the model all 10,000 images at once, you might use batches of 32 images. This way, the model works on one batch at a time. The number of samples in each batch is called the batch size, and it’s an important setting that affects training. Using batches helps save memory and often makes training faster and more effective, since the model updates its weights more often. (Devarakonda et al., 2017)
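As a rough illustration of the splitting described above, the sketch below cuts a dataset into batches of 32. The helper name `iter_batches` and the use of plain integers in place of images are assumptions made for the example; they do not come from any particular framework.

```python
def iter_batches(samples, batch_size):
    """Yield consecutive slices of `samples`, each at most `batch_size` long."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(samples), batch_size):
        yield samples[start:start + batch_size]


# Stand-in for 10,000 images: here just the integers 0..9999 (an assumption).
dataset = list(range(10_000))
batches = list(iter_batches(dataset, batch_size=32))

print(len(batches))      # 313 batches in one pass over the data
print(len(batches[0]))   # 32 samples in a full batch
print(len(batches[-1]))  # 16 samples in the final, smaller batch
```

Because 10,000 is not a multiple of 32, the last batch is smaller than the rest. Frameworks usually let you choose whether to keep or drop such a partial batch.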

Iterations in an Epoch in Machine Learning: The Steps Within Each Pass

An iteration is when the model updates itself using one batch of data. For example, if you have 10,000 samples and a batch size of 100, it takes 100 iterations (10,000 divided by the batch size of 100) to complete one epoch. The model improves a bit with each iteration as it works through batch after batch.

The Relationship Between Epochs, Batches, and Iterations

These three ideas fit together in a clear order:

- Epoch: One full cycle through the entire training dataset.

- Batch: A subset of the training dataset that is processed together.

- Iteration: The processing of a single batch, resulting in one model update.

Here’s a simple example to show how it all works together:

- Assume a training dataset with 2,000 training samples.

- You choose a batch size of 100.

- You decide to train the model for 50 epochs.

From this, we can calculate:

- Iterations per Epoch: Dataset Size / Batch Size = 2,000 / 100 = 20 iterations. This means the model’s weights will be updated 20 times during each epoch.

- Total Iterations: Iterations per Epoch * Number of Epochs = 20 * 50 = 1,000 iterations. This is the total number of times the model’s parameters will be adjusted throughout the entire training process.

- Now, try this for yourself: calculate your own iterations per epoch by dividing your dataset size by your batch size, rounding up if it does not divide evenly. This hands-on step will help you see how these ideas fit your own work.
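The same arithmetic can be sketched in a few lines of Python. The function names are hypothetical, and the 50 epochs are the figure used in the total-iterations calculation above. The division is rounded up on the assumption that a final partial batch still counts as one iteration.

```python
import math


def iterations_per_epoch(dataset_size, batch_size):
    # Round up: a final partial batch still costs one iteration.
    return math.ceil(dataset_size / batch_size)


def total_iterations(dataset_size, batch_size, num_epochs):
    return iterations_per_epoch(dataset_size, batch_size) * num_epochs


print(iterations_per_epoch(2_000, 100))   # 20
print(total_iterations(2_000, 100, 50))   # 1000
```

Swap in your own dataset size and batch size to estimate how many weight updates a training run will perform.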

Understanding these concepts helps set up training, estimate time, and read training logs.

How Epochs Drive Model Learning: The Mechanics of Training

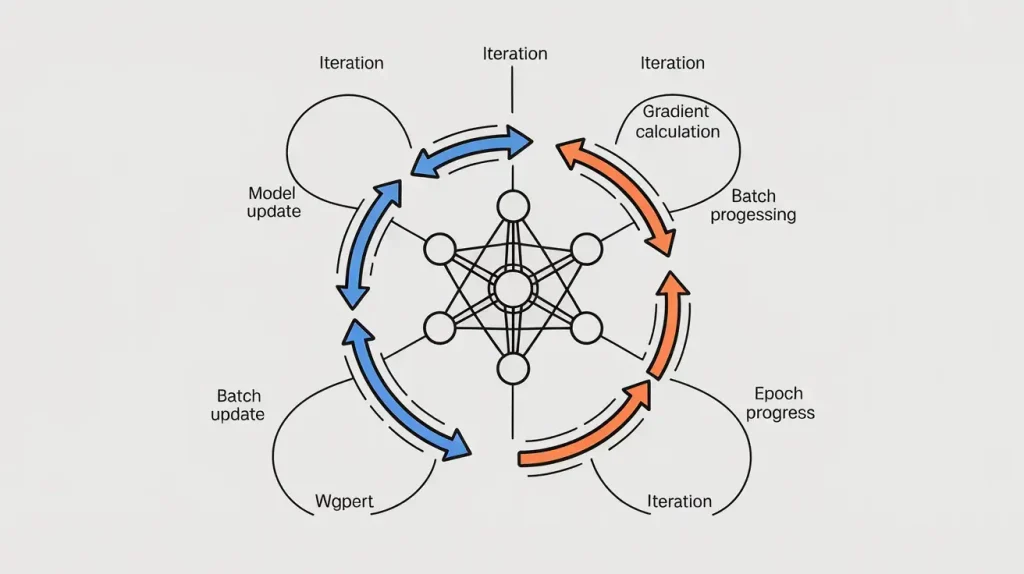

An epoch is more than just a pass through the training set. It’s a cycle where the model learns and gets better. During each epoch, the model does calculations that help it improve step by step. The learning algorithm, often gradient descent, changes the model’s weights to lower prediction errors. At each iteration, the model checks its predictions, calculates the loss, and uses gradients to decide how to adjust the weights. This process happens over and over. Repeating epochs helps the model get closer to the best results when training neural networks.

The gradient points in the direction where the loss increases the most. To reduce loss, gradient descent moves in the opposite direction. The learning rate controls how big each step is. In every iteration, you calculate gradients and update the weights to lower the loss. The gradients are computed with a method called backpropagation, which is used in training neural networks. With mini-batch stochastic gradient descent, the process within a single iteration looks like this:

- Forward Pass: A batch of data is fed into the network. The input travels through the layers, with each neuron performing its calculation, until an output (a prediction) is produced.

- Loss Calculation: The model’s predictions for the batch are compared to the true labels using a loss function (e.g., Mean Squared Error for regression, Cross-Entropy for classification). This yields a single number representing the average error for that batch.

- Backward Pass (Backpropagation): The algorithm now propagates the loss backward. It first calculates the gradient of the loss with respect to the final-layer weights, then propagates these gradients back to the input layer, layer by layer. This process uses the chain rule of calculus to efficiently determine how much each individual weight in the network contributed to the final error.

- Weight Update: Once the gradients are calculated, the optimizer (e.g., Adam, SGD) updates each weight in the network. For plain SGD the update rule is simple: new_weight = old_weight - (learning_rate * gradient). (Delta rule, n.d.) This adjustment moves the weights slightly closer to their optimal values. Optimizers such as Adam build on the same idea but scale each step using running averages of past gradients.

These four steps happen in every iteration. An epoch is complete once every batch in the training dataset has gone through them.
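To make the four steps concrete, here is a minimal sketch in plain Python, assuming a one-variable linear model in place of a neural network so the gradients can be written by hand. The function name `train`, the toy data, and the hyperparameter values are all assumptions chosen for illustration.

```python
import random


def train(xs, ys, num_epochs=200, batch_size=4, learning_rate=0.05, seed=0):
    """Fit y ~ w * x + b with mini-batch SGD; returns (w, b, loss_per_epoch)."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    epoch_losses = []

    for _ in range(num_epochs):                  # one epoch = one full pass
        rng.shuffle(indices)
        running_loss, num_batches = 0.0, 0
        for start in range(0, len(indices), batch_size):   # one iteration per batch
            batch = indices[start:start + batch_size]

            # 1. Forward pass: predictions for this batch
            preds = [w * xs[i] + b for i in batch]

            # 2. Loss calculation: mean squared error over the batch
            errors = [p - ys[i] for p, i in zip(preds, batch)]
            loss = sum(e * e for e in errors) / len(batch)

            # 3. Backward pass: gradients of the loss w.r.t. w and b
            grad_w = sum(2 * e * xs[i] for e, i in zip(errors, batch)) / len(batch)
            grad_b = sum(2 * e for e in errors) / len(batch)

            # 4. Weight update: step against the gradient
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b

            running_loss += loss
            num_batches += 1
        epoch_losses.append(running_loss / num_batches)
    return w, b, epoch_losses


# Toy data generated from y = 2x + 1 (an assumption for the example)
xs = [i / 10 for i in range(20)]
ys = [2 * x + 1 for x in xs]
w, b, losses = train(xs, ys)
print(round(w, 2), round(b, 2))
print(losses[0] > losses[-1])
```

With this noise-free data the learned `w` and `b` should end up close to 2 and 1, and the average loss in the last epoch should be far below the first. In a real network, a framework computes step 3 automatically through backpropagation.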

The Cumulative Effect of Multiple Epochs

One epoch by itself doesn’t teach the model much. Over many epochs, the model goes from making random guesses to fine-tuning its weights and making better predictions. Each epoch builds on the last, slowly reducing errors.

Strategic Epoch Management in Machine Learning: Finding the Right Balance

There is no single magic number for the ideal count of epochs. It depends heavily on several factors:

- Model Complexity: More complex models, like deep neural networks with millions of weights, often require more epochs to converge.

- Dataset Size and Complexity: Larger and more complex datasets typically require more passes for the model to learn all the underlying patterns.

- Learning Rate: A smaller learning rate often necessitates more epochs, as the model takes smaller steps toward the optimal solution during each iteration.

The aim is to train for enough epochs so the model learns the main patterns but doesn’t memorize random details. You check this by looking at how the model does on both the training data and a separate validation set. A simple rule is to stop training if the validation loss stays the same for three epochs in a row. This is a good starting point as you learn more about reading learning curves.

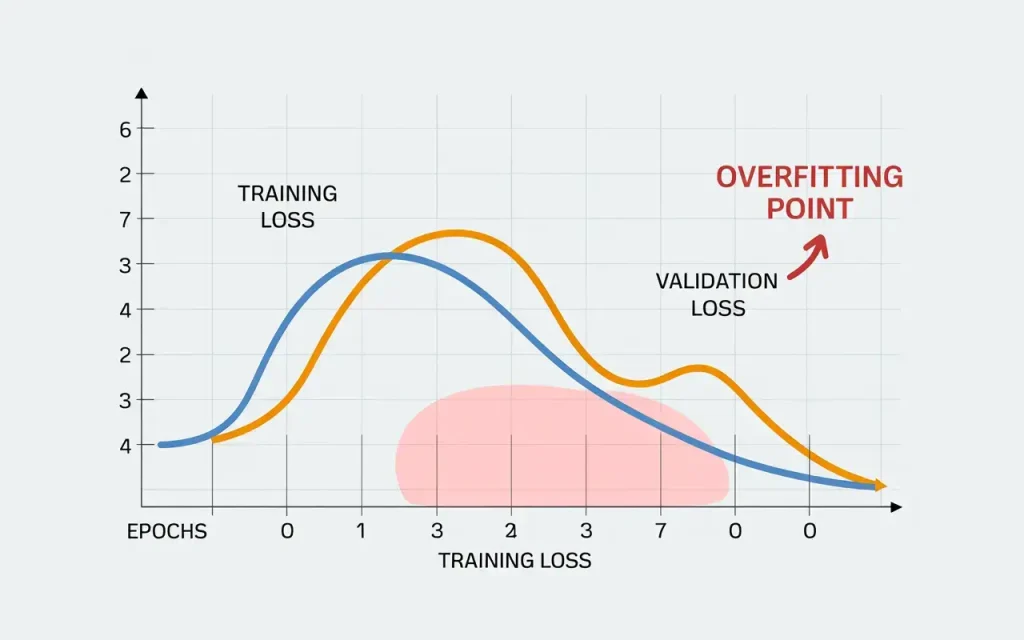

The main problem in choosing the number of epochs is balancing underfitting and overfitting.

- Underfitting: This occurs when the model is not trained for enough epochs. The model hasn’t had sufficient opportunity to learn the underlying structure of the data because it has not seen the data enough times. The result is poor performance on both the training data and new, unseen data. The model has high bias, meaning it makes overly simplistic assumptions. Symptoms include a high loss value that has not yet plateaued for both training and validation sets. The solution is often straightforward: increase the number of epochs.

- Overfitting: This is the more insidious problem and occurs when the model is trained for too many epochs. The model begins to memorize the training data, including its noise and random fluctuations, rather than learning the generalizable patterns. While its performance on the training dataset may continue to improve (training loss decreases), its performance on the validation set will start to degrade (validation loss increases). The model has high variance and will perform poorly on new data. This is the primary danger of setting the number of epochs too high.

The best point is when validation loss is lowest, just before it rises. This shows the model learned general patterns without overfitting. For example, a spam email classifier trained too briefly underfits, wrongly marking real emails as spam and missing patterns. A weather model trained too long overfits, memorizing past data but failing with new conditions like unusual storms. These examples show choosing the right number of epochs matters—it can make a model generalize well or fail by underfitting or overfitting.
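Finding that lowest point is easy to do in code once you have one validation loss per epoch. The sketch below uses a hypothetical helper, `best_epoch`, and the loss values are invented for illustration rather than taken from a real training run.

```python
def best_epoch(val_losses):
    """Return the 1-based epoch with the lowest validation loss."""
    if not val_losses:
        raise ValueError("val_losses is empty")
    best_index = min(range(len(val_losses)), key=val_losses.__getitem__)
    return best_index + 1


# Invented validation losses: they fall, bottom out, then rise again.
val_losses = [0.90, 0.62, 0.48, 0.41, 0.39, 0.40, 0.44, 0.51]
print(best_epoch(val_losses))  # 5
```

Here the loss is lowest at epoch 5, so the later epochs only moved the model toward overfitting.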

Visualizing Epoch Progress: Interpreting Learning Curves in Machine Learning

Relying on fixed, guesswork-based methods to determine the number of epochs is neither efficient nor reliable. A more effective approach is to observe how the model learns over time by using learning curves. Learning curves are simple but powerful tools. They show model performance over many iterations. They help you understand the training process.

A learning curve is a graph that typically shows two lines:

- Training Loss/Metric: The value of the loss function (or another performance metric like accuracy) calculated on the training data at the end of each epoch.

- Validation Loss/Metric: The value of the same metric calculated on a separate validation dataset, which the model does not see during training.

If you plot these two lines on a graph, with the number of epochs on the x-axis and the metric value on the y-axis, you can see how the model learns and generalizes as it trains.

Interpreting Learning Curves: Diagnosing Model Behavior

Learning curves give you a clear picture of how your model is doing and can show if it’s underfitting, overfitting, or just right. In a good fit, both training and validation losses go down and end up close together. This means the model is learning from the training data and can handle new data well.

- Underfitting: If both the training and validation loss remain high and plateau far from zero, the model is likely underfitting. It is failing to capture the underlying patterns in the data. The curve shows that performance is not improving even after several more epochs of training. This usually suggests the model is too simple for the task. If the losses are still falling rather than flat, training may simply need to continue.

- Overfitting: This is the most common pattern to watch for. The training loss continues to decrease steadily over many epochs, approaching zero. However, the validation loss decreases for a while before beginning to rise. The point where the validation loss bottoms out and then starts to increase marks the onset of overfitting. The model is no longer learning general patterns but is instead memorizing the specific training samples. The widening gap between the falling training loss and the rising validation loss is the classic signature of overfitting.
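These patterns can be turned into a rough automated check. The sketch below is a heuristic under stated assumptions: the function name `diagnose` and the thresholds `high_loss` and `rise_tolerance` are invented for illustration and would need tuning for a real loss scale.

```python
def diagnose(train_losses, val_losses, high_loss=0.5, rise_tolerance=0.02):
    """Label a pair of per-epoch loss curves with a simple heuristic."""
    if not train_losses or len(train_losses) != len(val_losses):
        raise ValueError("curves must be non-empty and the same length")

    best_val = min(val_losses)
    final_train, final_val = train_losses[-1], val_losses[-1]

    # Validation loss has climbed back above its minimum while the
    # training loss sits below it: the widening-gap signature.
    if final_val - best_val > rise_tolerance and final_train < final_val:
        return "overfitting"

    # Both curves end high: the model has not captured the patterns.
    if final_train > high_loss and final_val > high_loss:
        return "underfitting"

    return "good fit"


# Invented curves for illustration
print(diagnose([0.9, 0.5, 0.3, 0.15, 0.05], [0.95, 0.6, 0.45, 0.5, 0.6]))
print(diagnose([1.0, 0.9, 0.85, 0.84, 0.84], [1.05, 0.95, 0.9, 0.9, 0.9]))
print(diagnose([0.9, 0.5, 0.3, 0.2, 0.15], [0.95, 0.55, 0.35, 0.25, 0.2]))
```

The three calls print "overfitting", "underfitting", and "good fit" in that order. A check like this is no substitute for looking at the plotted curves, but it can flag runs that deserve a closer look.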

To read the curves, compare them rather than looking at either one alone. A training loss that keeps falling while the validation loss rises points to overfitting. Training and validation losses that both stay high point to underfitting. Reading the graphs this way helps you pick the number of epochs and avoid extra training. Early stopping can do this automatically. It limits overfitting and saves computing power. For example, if training is planned for 100 epochs but early stopping ends it at 60, you skip 40% of the planned epochs, reducing GPU use and costs.

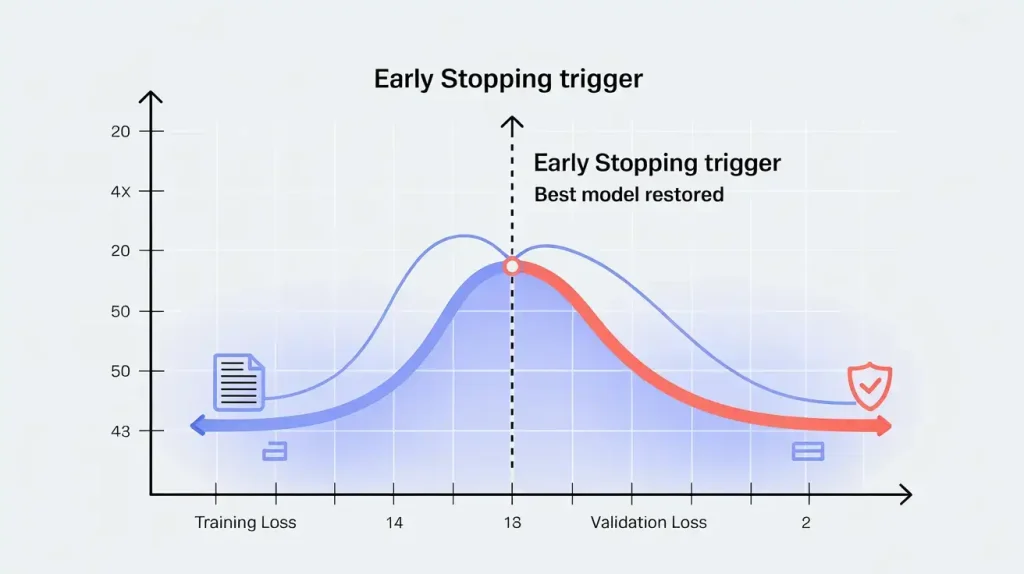

Early Stopping To Prevent Overfitting

Early stopping is a method to prevent overfitting. It is useful when training for many epochs causes poor generalization. (Early stopping, n.d.) You set a very large number of epochs and then watch the validation loss or another validation metric. Training stops automatically when the validation loss has not improved for a set number of consecutive epochs.

The patience parameter, the number of epochs to wait without improvement, is important. For example, you can set early stopping to end training if the validation loss does not decrease for 10 consecutive epochs. Most implementations can also keep the model weights from the epoch with the best validation loss. This way, you get the best model without having to check learning curves yourself or retrain. Early stopping is a popular and effective way to prevent overfitting, so the epoch count becomes less critical. (Liang et al., 2019) Alongside early stopping, other techniques can work in concert to optimize the training process across epochs.

- Learning Rate Schedules: Instead of using a fixed learning rate throughout training, a learning rate schedule adjusts it over time. A common approach is to start with a higher learning rate to learn quickly at first, then lower it as training goes on. (Defazio et al., 2023) This gradual decrease lets the algorithm make smaller, more precise changes to the weights as the model gets closer to the best solution.

- Model Checkpointing: Checkpointing saves the model’s weights at intervals during training, and it serves two crucial purposes. First, it acts as a safeguard against system crashes or interruptions during long training runs. Second, combined with early stopping, it allows the model to be restored from the best-performing epoch, which helps it generalize.
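One simple form of learning rate schedule is step decay, where the rate is cut by a fixed factor every few epochs. The sketch below is only an illustration. The function name `step_decay` and the values for `drop_factor` and `epochs_per_drop` are assumptions, and many other schedules exist.

```python
def step_decay(initial_lr, epoch, drop_factor=0.5, epochs_per_drop=10):
    """Learning rate for a 0-based `epoch`: multiplied by `drop_factor` every `epochs_per_drop` epochs."""
    return initial_lr * drop_factor ** (epoch // epochs_per_drop)


for epoch in (0, 9, 10, 25, 40):
    print(epoch, step_decay(0.1, epoch))
```

Starting from 0.1, the rate stays at 0.1 for epochs 0 through 9, drops to 0.05 at epoch 10, and keeps halving every 10 epochs after that.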

These strategies help you manage epochs based on data instead of guessing. This makes your models stronger and more reliable.
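Early stopping with a patience value and an in-memory copy of the best weights can be sketched without any framework. The class below is a minimal illustration, not the implementation used by Keras or PyTorch. The names `EarlyStopping`, `step`, and `min_delta`, the placeholder weights, and the validation losses are all assumptions.

```python
import copy


class EarlyStopping:
    """Track validation loss and signal when training should stop."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = None
        self.best_weights = None          # in-memory "checkpoint"
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss, weights):
        """Record one epoch; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            # New best: remember the loss and keep a copy of the weights.
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.best_weights = copy.deepcopy(weights)
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience


# Invented validation losses: the best value is reached at epoch 4.
val_losses = [0.80, 0.60, 0.50, 0.45, 0.47, 0.46, 0.48, 0.50, 0.52]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, val_loss in enumerate(val_losses, start=1):
    weights = {"w": float(epoch)}         # placeholder for real model weights
    if stopper.step(epoch, val_loss, weights):
        stopped_at = epoch
        break

print(stopper.best_epoch, stopped_at)     # 4 7
print(stopper.best_weights)               # {'w': 4.0}
```

Training stops at epoch 7, three epochs after the best result, and the weights saved from epoch 4 are the ones you would restore. In practice you would usually write the checkpoint to disk so it also survives a crash.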

Epochs in Practice: Real-World Scenarios and Code Examples in Machine Learning

Learning the theory is important, but seeing how epochs work in code helps even more. Modern tools like TensorFlow (with Keras) and PyTorch make it easy to set up training loops. Next, we’ll look at how epochs work in Python using these frameworks.

Let’s look at a typical implementation in Keras, a high-level API for TensorFlow. The core logic is encapsulated within the model.fit() method.

    import tensorflow as tf
    from tensorflow.keras.models import Sequential
    from tensorflow.keras.layers import Dense

    # Assume X_train, y_train are your preprocessed training data
    # and X_val, y_val are your validation data.

    # 1. Define a simple neural network model
    model = Sequential([
        Dense(128, activation='relu', input_shape=(X_train.shape[1],)),
        Dense(64, activation='relu'),
        Dense(1, activation='sigmoid')  # For binary classification
    ])

    # 2. Compile the model, specifying the optimizer and loss function
    model.compile(optimizer='adam',
                  loss='binary_crossentropy',
                  metrics=['accuracy'])

    # 3. Define hyperparameters
    num_epochs = 50
    batch_size = 32

    # 4. Train the model using the .fit() method
    history = model.fit(X_train, y_train,
                        epochs=num_epochs,
                        batch_size=batch_size,
                        validation_data=(X_val, y_val))

    # The 'history' object contains the training and validation loss/metrics for each epoch
    print(history.history.keys())
    # dict_keys(['loss', 'accuracy', 'val_loss', 'val_accuracy'])

In this example, the epochs parameter tells Keras to go through the training data 50 times. The batch_size parameter means the data is handled in groups of 32 samples at a time. Batch size is a hyperparameter that can greatly affect training. (Difference Between Epoch and Batch Machine Learning, n.d.) Keras takes care of shuffling, batching, and looping for you. The history object you get back is useful for plotting the learning curves discussed earlier.

Case Study: Training a Neural Network for Image Classification

Imagine you train a convolutional neural network (CNN). It classifies images from the CIFAR-10 dataset. This dataset contains 50,000 training samples.

You set the batch size to 64. This means there will be 50,000 divided by 64, rounded up, or 782 iterations in each epoch. At each of these 782 steps, the model processes a batch of up to 64 images, computes the loss, and updates its weights using backpropagation.
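Written out, the iteration count comes from rounding the division up, since 64 does not divide 50,000 evenly and the leftover images still need one more step:

```latex
\[
\text{iterations per epoch}
  = \left\lceil \frac{50{,}000}{64} \right\rceil
  = \lceil 781.25 \rceil
  = 782
\]
\[
781 \times 64 = 49{,}984, \qquad 50{,}000 - 49{,}984 = 16
\]
```

So each epoch has 781 full batches of 64 images plus one final batch of 16, assuming the partial batch is kept rather than dropped.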

You decide to start with 100 epochs. You use early stopping with a patience of 10. You watch the validation accuracy.

- Epochs 1-20: Both training and validation accuracy rise quickly. The model learns the basic features of the images.

- Epochs 21-65: Both metrics continue to improve, but more slowly. The model is fine-tuning its parameters.

- Epoch 65: The validation accuracy reaches its peak at 91.5%.

- Epochs 66-75: The training accuracy keeps inching up, but the validation accuracy hovers around 91.3%-91.4% and never surpasses the peak at epoch 65.

- After epoch 75: Because the validation accuracy has not improved for 10 epochs (66 through 75), the early stopping mechanism triggers, halting the training.

The system automatically restores the model’s weights from epoch 65. This gives you the best version without spending time on the last 25 planned epochs, which could have caused overfitting.

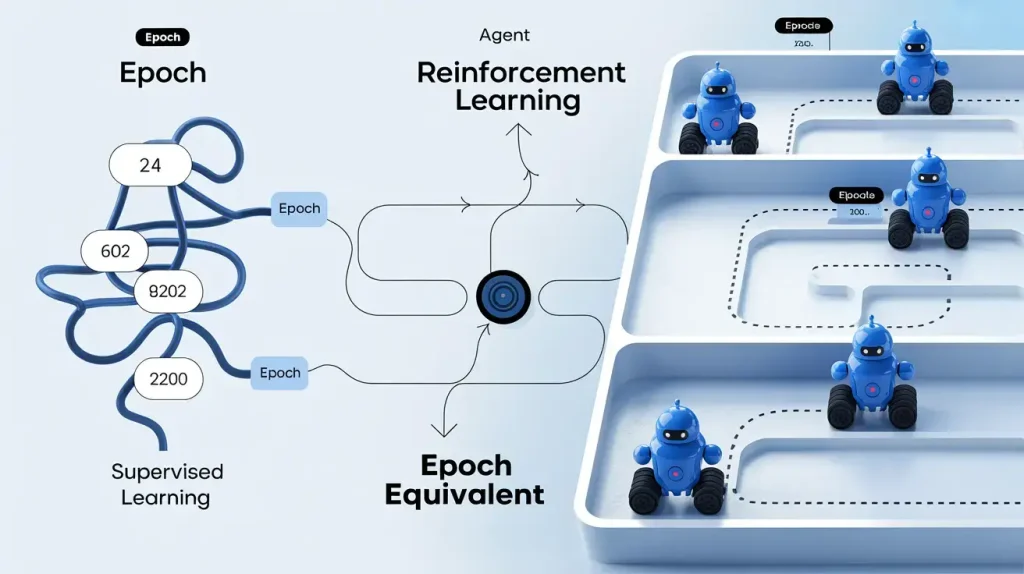

The idea of an epoch is clearest in supervised learning with a fixed training dataset. Similar ideas exist in other types of machine learning. The main principle is that models improve through repeated experience, a theme that recurs across machine learning.

Reinforcement Learning: Episodes as Analogous to Epochs

In Reinforcement Learning, an agent learns by interacting with an environment. The agent tries to get the highest total reward. (A4.3.6 Describe how an agent learns to make decisions by interacting with its environment in reinforcement learning. (HL only) | Computer Science KB, n.d.) There is no static dataset to pass through. Instead, the agent’s experience is collected in episodes.

An episode is a full run of a task, from start to finish. For example, in a game, an episode is a complete playthrough from the start to the ‘Game Over’ screen. The agent learns from the actions and rewards it gets during each episode. A group of episodes used for a policy update is similar to a batch. Learning over many episodes is like training for many epochs. Both involve learning step by step from experience to get better.

Other Areas: Transfer Learning and Fine-tuning

In transfer learning, you take a pre-trained model that was trained for many epochs on a large dataset like ImageNet. You then adapt it for a new, specific task. (Dukler et al., 2023) The process of adapting this model is called fine-tuning.

During fine-tuning, you keep training the model on your smaller, task-specific dataset. You still choose the number of epochs for this step. Because the model’s weights are already well-tuned, you usually need fewer epochs than if you started from scratch. Often, just a few epochs are enough to adjust the pre-trained features to the new data without losing what the model learned before. The main idea is the same: each epoch represents a full pass over the new data to help the model improve.

The epoch is a basic part of how machine learning models are trained. It sets the pace for how a model learns, turning the goal of minimizing loss into a clear, step-by-step process. An epoch is one full pass over the training set, letting the algorithm see every example and update its weights.

An epoch isn’t just a single event. It’s made up of smaller steps. Batches break the data into smaller parts, and the model goes through each batch one by one. In each step, the model calculates gradients and updates its weights. This setup makes it possible to train large models efficiently.

Mastering epoch management means finding the right balance between underfitting and overfitting. If you use too few epochs, the model stays undertrained. If you use too many, it memorizes the data and cannot handle new information. Here are the main points for effective training:

- Understand the relationship between dataset size, batch size, and iterations so you can configure your training loop intelligently.

- Visualize and monitor your model by using learning curves to check its behavior. If the training and validation losses start to move apart, that’s a key sign of overfitting.

- Automate your training by using methods like early stopping to find the best training length, save time, and prevent overfitting. Combine this with model checkpointing so you always keep your best model.

To solidify these concepts, why not run a small experiment today? Try altering the batch size or the number of epochs and observe how the learning curve responds. This hands-on experiment will help you turn theory into practice.

When you treat the number of epochs as an important setting and use these strategies, you stop guessing and start using data to guide you. This careful approach helps you build strong machine learning models that train efficiently and solve real problems.